Article By Regina Morrison

Angela Lipps spent nearly six months in jail because an algorithm looked at surveillance footage and decided she matched the suspect. She had never been to North Dakota. She had never been on a plane. A facial recognition system said otherwise, and police took that as enough.

Lipps, a 50-year-old mother and grandmother from north-central Tennessee, was arrested at her home in July while babysitting four children. US marshals arrived with guns drawn. She was booked as a fugitive from justice.

“I’ve never been to North Dakota, I don’t know anyone from North Dakota,” she told WDAY News.

The case began with bank fraud in Fargo.

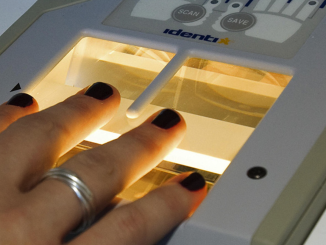

Between April and May 2025, someone used a fake US Army military ID to withdraw tens of thousands of dollars from banks across the city. Detectives pulled surveillance footage of a woman at the counters. They fed that footage into facial recognition software. The software returned a name: Angela Lipps.

A detective wrote in court documents that Lipps appeared to match the suspect based on facial features, body type, and hairstyle.

That assessment, made by software and rubber-stamped in a report, was treated as sufficient cause for arrest. Nobody from the Fargo police called Lipps before the marshals showed up at her door.

She sat in a Tennessee county jail for 108 days waiting for North Dakota to arrange her transport. No bail. Four counts of unauthorized use of personal identifying information. Four counts of theft. The algorithm had spoken.

Her attorney, Jay Greenwood, told InForum: “If the only thing you have is facial recognition, I might want to dig a little deeper.”

Fargo police did not dig deeper. What eventually cleared Lipps was her bank records, which showed she had been more than 1,200 miles away in Tennessee during every transaction investigators said she committed in North Dakota. Greenwood obtained those records and brought them to the investigators. Lipps was released on Christmas Eve.

The story didn’t end there. While locked up and unable to pay bills, Lipps lost her home, her car, and her dog. When Fargo police released her, they didn’t arrange her trip back to Tennessee. Defense attorneys helped cover a hotel room and food over Christmas. A local nonprofit, the F5 Project, got her home.

As of the reporting from InForum, nobody from the Fargo police department had apologized.

This is how facial recognition operates in cases like this one: it generates a match, law enforcement acts on it, and the burden of disproving a computer's guess falls entirely on the person whose life gets upended.

Lipps had to produce documentary evidence of her own location to escape charges based on software that was simply wrong.

The Lipps case is not unusual. Last October, an AI system at a Baltimore County school identified a bag of Doritos as a firearm and notified police.

Officers arrived armed at Kenwood High School, forced student Taki Allen to his knees, handcuffed him, and searched him. They found nothing.

In the UK, Shaun Thompson, 39, had just finished a volunteer shift with Street Fathers, a group dedicated to steering young people away from knife crime, when the Metropolitan Police’s live facial recognition cameras flagged him outside London Bridge station.

Officers detained him for nearly half an hour, demanded his fingerprints, and threatened arrest, even as he produced multiple forms of ID proving he wasn’t the person they were looking for. “They were telling me I was a wanted man, trying to get my fingerprints and trying to scare me with arrest, even though I knew and they knew the computer had got it wrong,” he said.

Thompson is now bringing the first legal challenge of its kind against the Metropolitan Police’s use of live facial recognition. The man the algorithm flagged as a criminal was spending his evening trying to prevent crime. The technology made no distinction.

What these cases share is a common architecture. A system makes an identification, human oversight treats that identification as reliable, and the person flagged has no recourse until significant damage has already been done.
